Why you need average reviews too

Aleš Randa

10 min

There is a huge amount of highly negative or positive reviews on the internet, but hardly any average reviews. Why is this? In our search for an answer, we found a problem and an opportunity in one. Find out more!

The aim of online reviews is to get honest and unbiased feedback from customers

That's the only way the resulting texts will help other shoppers, who won't have to wonder whether yet another calculating marketer is trying to trick them. They will breathe a sigh of relief and treat any testimonial as the advice of a good friend.

As a result, review marketing helps brands fight the deep crisis of trust that currently afflicts virtually all businesses around the world.

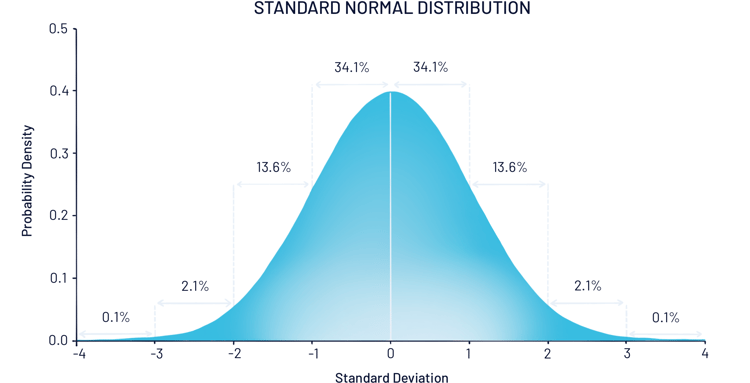

If you plotted the ratings of all the products in the world on a graph, it would be approximately normally distributed

In fact, many people will agree on an average value, and most ratings will cluster around it. Only a very small share (less than 5%) will land at the extremes. Graphically, in an ideal review world, it would look like this:

This is what a normal data layout looks like in practice. The x-axis shows the values (for example the number of stars), the y-axis shows their frequencies. You can see that most of the data is typically around the middle.

Source: What is a Normal Distribution in Statistics? • RPP Baseball (rocklandpeakperformance.com)

The assumption of a normal distribution of reviews is so strong that for a long time no one bothered to test it. It was only a few years ago that a team of researchers from American and Chinese universities got around to it: they took a sample of three product categories from the US marketplace Amazon, pulled thousands of reviews and calculated standard descriptive statistics on them.

The results took the authors by surprise.

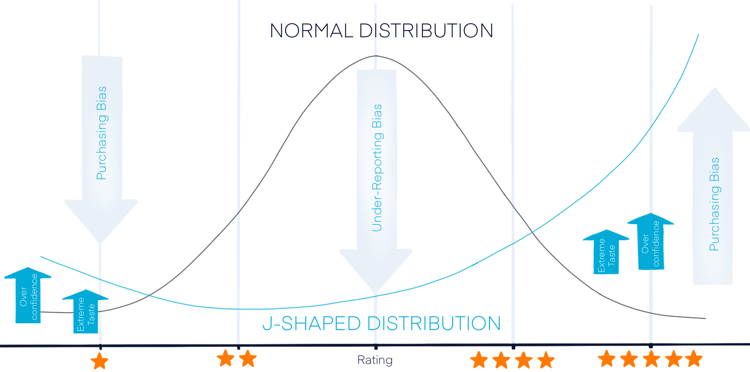

They were all wrong: review scores have a J-shaped distribution, and review marketing may have a serious problem

The term "J-shaped distribution" refers to a distribution in which you find very few low values, almost no medium values, and a disproportionately large number of high values. That's exactly the shape the authors found. 72% of product reviews had at least 4 stars, the rest were at the negative extreme, and there was almost nothing in the middle.

Compared to the normal distribution, the J-shaped distribution has more extreme cases at both ends and practically no medium values.

Source: J Shaped Distribution - Statistics How To

Why is this distribution of ratings problematic?

Because it contradicts both theory and simple intuition.

Do most brands really have an absolutely great range, with only a handful of products being a bit of a "flop"? According to the statistics, certainly not. Out of the 30,000 new products that see the light of day every year, 95% will fail.

The remaining 5% of brands will make it, but due to various (and often random) factors, their performance will be uneven. A few will rise to the top of the industry, some will fail, but most will perform around the 'golden mean'. In other words, their performance must be approximately normally distributed.

Why is this not reflected in the reviews? And why is the neutral experience almost entirely absent from the listings, where you can find both positive and negative reviews of the products?

Scientists offer an elegant but worrying explanation

According to them, the results of reviews do not reflect reality and are constantly distorted in practice by two phenomena: purchase bias and underreporting bias.

Purchase bias

Purchase bias is based on the fact that people who have a positive perception of a product are significantly more likely to convert than those who are hostile to it from the outset.

- Therefore, disproportionately more "fans" will purchase the product, which inevitably leads to higher ratings, regardless of the product's actual features. And even if these positively inclined buyers are a little disappointed by the item, they still tend to give it a higher rating, due to the psychological phenomenon of cognitive dissonance.

- The inevitable result of this interplay of factors is inflated rating averages.

Cognitive dissonance occurs when new information strongly contradicts our established values or beliefs. People often react to this situation by stubbornly sticking to their original beliefs, even if it requires them to bend reality slightly. In reviews, this can lead to unrealistically high ratings for products that would not otherwise deserve them.

Underreporting bias

The underreporting bias says that people with extreme views are much more likely to brag or complain.

- This is also known as the "brag or moan effect". It explains why there are disproportionately more negative/positive reviews on the internet than neutral ones.

- People who have a very good or very bad experience are simply more likely to talk about it. On the other hand, people with a normal user experience tend to remain silent.

The cumulative detrimental effect of both biases is shown in the graph below:

In this graph you can see how both biases distort the distribution of ratings, which should otherwise be normally distributed (indicated by the red line). The purchase bias increases the number of scores at both extremes, while the underreporting bias is behind the disappearance of the middle values.

Source: Overcoming the J-shaped distribution of product reviews | Communications of the ACM
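To make the interplay of the two biases more tangible, here is a minimal sketch in Python. It is not the study's model: the "true" satisfaction shares, the purchase probabilities and the reporting probabilities are all invented for illustration. The sketch simply multiplies the three together to see which ratings would actually end up being published.

```python
# Illustrative only: every number below is a made-up assumption,
# not a figure from the study.
STARS = [1, 2, 3, 4, 5]

# Assumed "true" satisfaction across all potential customers (bell-shaped).
true_share = [0.05, 0.20, 0.50, 0.20, 0.05]

# Purchase bias: assumed probability of buying, higher for people
# who view the product more favourably.
buy_prob = [0.30, 0.40, 0.50, 0.70, 0.90]

# Underreporting bias: assumed probability of writing a review after buying,
# high at the extremes and very low in the middle.
report_prob = [0.80, 0.10, 0.03, 0.15, 0.80]


def normalize(weights):
    """Scale a list of non-negative weights so that they sum to 1."""
    total = sum(weights)
    return [w / total for w in weights]


def mean_stars(shares):
    """Average star rating implied by a list of shares for 1 to 5 stars."""
    return sum(star * share for star, share in zip(STARS, shares))


# Share of each rating among the reviews that actually get written.
observed_share = normalize(
    [t * b * r for t, b, r in zip(true_share, buy_prob, report_prob)]
)

for star, t, o in zip(STARS, true_share, observed_share):
    print(f"{star} stars: true {t:.1%}, observed {o:.1%}")
print(
    f"mean: true {mean_stars(true_share):.2f}, "
    f"observed {mean_stars(observed_share):.2f}"
)
```

With these assumed inputs, the published ratings pile up at 5 stars, keep a smaller cluster at 1 star and thin out in the middle, while the average rises above the "true" one. Different assumptions would give different numbers, but the direction of the distortion is the point.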

Both biases and the J-shaped rating distribution are dangerous for review marketing and require action

Because they can easily cause you to lose the benefits that reviews bring to sellers.

- People don't trust perfect reviews. In fact, up to 82% of them will specifically look for negative reviews, and if they don't find any, up to 95% of them will suspect you of fraud.

- Research shows that the most trustworthy reviews contain a mix of ratings from 1 to 5 stars, with the most desirable average rating being between 4.2 and 4.5.

- You won't learn anything from outliers. They will be either too negative or naively enthusiastic. Imagine you're an intern starting out in a company: will you learn more from a boss who either praises you to the skies or just puts you down? Or do you get more valuable feedback from balanced, constructive criticism? At our company, we take the second option, and the same goes for reviews.

- Without valuable information from reviews, you have nothing from which to create quality user-generated content (UGC). That's what we've been telling you in all of our recent articles, as it's one of the most powerful marketing tools available today. It has a proven impact on conversions, delivers impressive ROI and outperforms traditional methods on all counts when it comes to credibility. It can be used in advertising, emailing and product development itself.

- You can't even use biased reviews to predict sales. That's because prediction models use the average rating as a predictor, but in a sample dominated by outliers that average is biased and therefore useless. Trying to predict anything from it would be misguided and have less predictive value than a coin flip.

What can protect you from bias? Part 2 of the study offers a solution

In this part, the authors decided to conduct an experiment. They created imaginary duplicates of the Amazon products they had previously examined, and had them reviewed by a new sample of independent reviewers. In this case, the distribution of ratings was unimodal and roughly normal, much closer to the theoretical assumptions. The resulting average was also significantly lower.

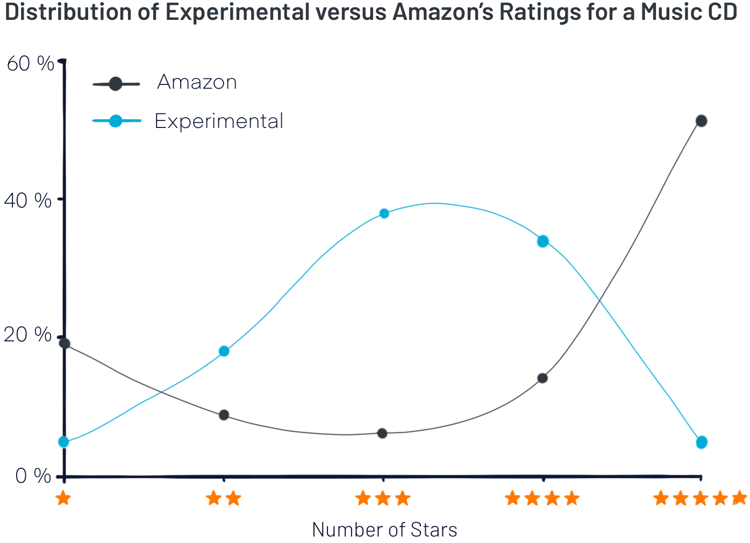

All this is clearly shown in the graph below:

Here you can see how the distribution of the number of stars differs for the same products rated by either conventional (purple line) or independent (blue line) testers. Only the rating of the experimental group resists the aforementioned biases and corresponds to the theoretical assumptions.

Source: Overcoming the J-shaped distribution of product reviews | Communications of the ACM

Want to protect yourself from bias? Consider the following factors

- You must only collect reviews from people who are unbiased about the products.

- Reviewers should be actively encouraged to write long reviews full of pros and cons.

- Positive negativity is also desirable. It can look like this: "The hotel is in a nice location, with friendly service and excellent food. However, we were very disappointed with the distance to the beach, it was quite a walk with small children."

- People read reviews to find out the pros and cons and to assess whether they can live with them. That's why they need reviews that don't shy away from constructive criticism. For example, in our model review above, everyone will be reassured that the hotel has good service, families will weigh up the distance to the beach, and singles are unlikely to worry about it.

- Product reviewers need to be people from the relevant target group who have enough know-how to know what to look for. Only these 'people experts' can be analytical in their testing and not so easily swayed by simple emotions.

- Last but not least, you need to encourage reviewers to create photo and video content to support their experience or illustrate important details.

How do you put the tips into practice? At Brand Testing Club, we've turned them into a complete business plan.

It took us several years to come up with the solution. We read hundreds of statistics and studies and used the knowledge we gained to fine-tune every detail. The result is a review collection method that helps brands across Central Europe.

A typical test with us looks like this:

- Based on your target audience, we will select the right testers from our club. You will meet thousands of people who are passionate about technology, fitness, cosmetics or even children's supplements. They all enjoy discovering new things and helping others make better buying decisions.

- Selected testers participate in each testing challenge with complete impartiality. They are rewarded whether they leave a positive or a negative review, and they always have enough time to test all the important aspects of your product range. All these factors ensure that the resulting reviews are highly protected from both purchase bias and cognitive dissonance.

- Testers take photos or videos of the testing process and then post everything to their social networks. This provides valuable content and off-site SEO support.

- The neutrality of the testers also minimises underreporting bias. Their goal is to thoroughly examine the product from every possible angle, and we actively encourage them to write long, informative reviews. They enjoy their work as much as we do. As a result, the return on investment is close to 100%.

- In addition to the testing itself, we organize the logistics of transporting the products to and from the testers. We will also prepare the final evaluation, with all the necessary statistics.

- Finally, we will help you place the reviews on all important review sites, where they will be as visible as possible.

Key takeaway: the J-shaped distribution could jeopardise review marketing

If there's one thing you can take away from today's article, it's the following: nothing beats testing products with unbiased, dedicated testers. They are the only ones who can keep out all the harmful cognitive biases that affect the evaluations of ordinary customers and cause all the problems mentioned in today's article.

Your brand needs good, bad and average reviews that keep the overall rating around the ideal 4.5 stars and give all customers enough valuable information to make a truly safe and confident purchase. Testing with us will give you exactly that, and make a lot of the work around it easier.

However, if you do decide to collect reviews from ordinary shoppers, make sure they understand the following principles:

- You want independent opinions, which can be both positive and negative.

- You want to hear absolutely everything, so that you can improve the product and let others know what they are buying.

- It's great to include a photo or video with the review. That way, reviewers can illustrate the problem or benefit mentioned in the text.

We also recommend that you regularly monitor the distribution of your ratings and take immediate action if it drifts too far toward the extremes. It's easy to believe that a wall of perfect scores simply reflects your great service, but no complex business is ever perfect, and falling for that illusion could cost you dearly.
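If you keep your star counts in a spreadsheet or export, a check like this can be automated. The following Python sketch is only one possible approach: the function name `summarize_ratings`, the example counts and the rule used to flag a J-shaped pattern are our own illustrative assumptions, not an established standard.

```python
def summarize_ratings(counts):
    """Summarize star counts given as a dict {stars: number_of_reviews}.

    Returns the share of each rating, the average, and a rough flag for a
    J-shaped pattern. The flag is a simple heuristic assumed for this
    example: 5 stars is the most common rating and 1 star is more common
    than both 2 and 3 stars.
    """
    total = sum(counts.get(star, 0) for star in range(1, 6))
    if total == 0:
        raise ValueError("no reviews to summarize")

    shares = {star: counts.get(star, 0) / total for star in range(1, 6)}
    mean = sum(star * share for star, share in shares.items())
    looks_j_shaped = (
        shares[5] == max(shares.values())
        and shares[1] > shares[2]
        and shares[1] > shares[3]
    )
    return {"shares": shares, "mean": mean, "looks_j_shaped": looks_j_shaped}


# Hypothetical star counts for two products.
polarized = summarize_ratings({1: 120, 2: 30, 3: 25, 4: 150, 5: 675})
balanced = summarize_ratings({1: 20, 2: 80, 3: 250, 4: 400, 5: 250})

for name, summary in [("polarized", polarized), ("balanced", balanced)]:
    print(
        f"{name}: mean {summary['mean']:.2f}, "
        f"J-shaped: {summary['looks_j_shaped']}"
    )
```

A flag like this is only a prompt to look closer. Whether the pattern really points to biased reviews still needs a human judgment about the product and the way the reviews were collected.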